Ecommerce websites are a great source of product information.

This includes product details, pricing, and data on deals and sales.

You could use this data to make better purchasing decisions, or for market and competitor research. Some companies collect prices to make better investment decisions for themselves or their clients. Others use regularly updated, web-scraped ecommerce data to publish price comparison blogs and reports.

Whatever your goal, here’s how to scrape data from an ecommerce site with a free web scraper and download it as an Excel sheet.

Web Scraping for Free

To scrape large volumes of data, you will need a web scraper.

For this guide, we will use ParseHub, a free and powerful web scraper that can handle most websites. Make sure to download and install ParseHub before we get started.

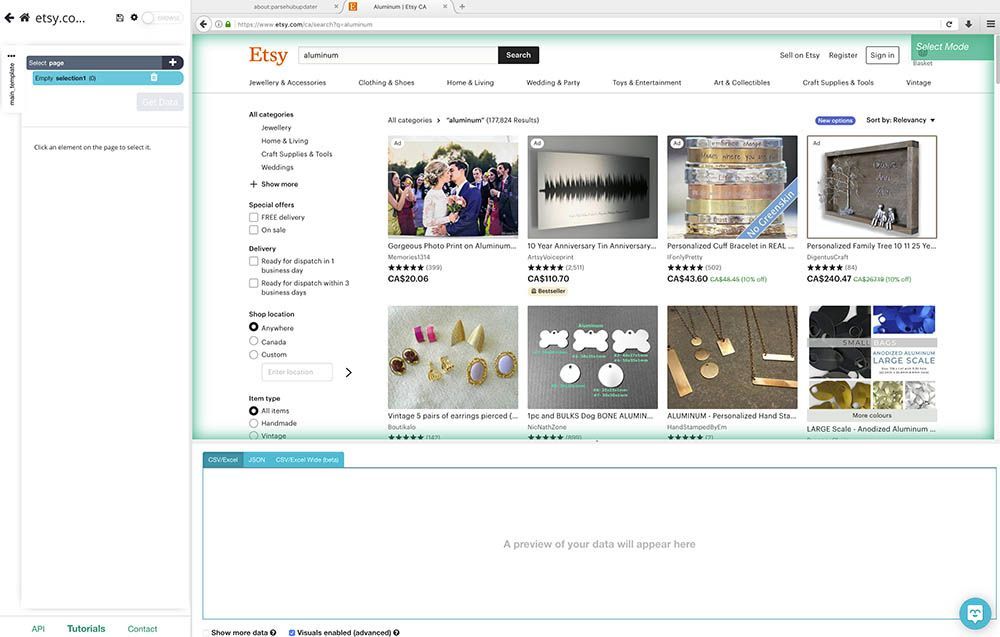

For this example, we will scrape data from Etsy, specifically Etsy’s search results page for “aluminum”.

Scraping an ecommerce website

Now, let’s get scraping.

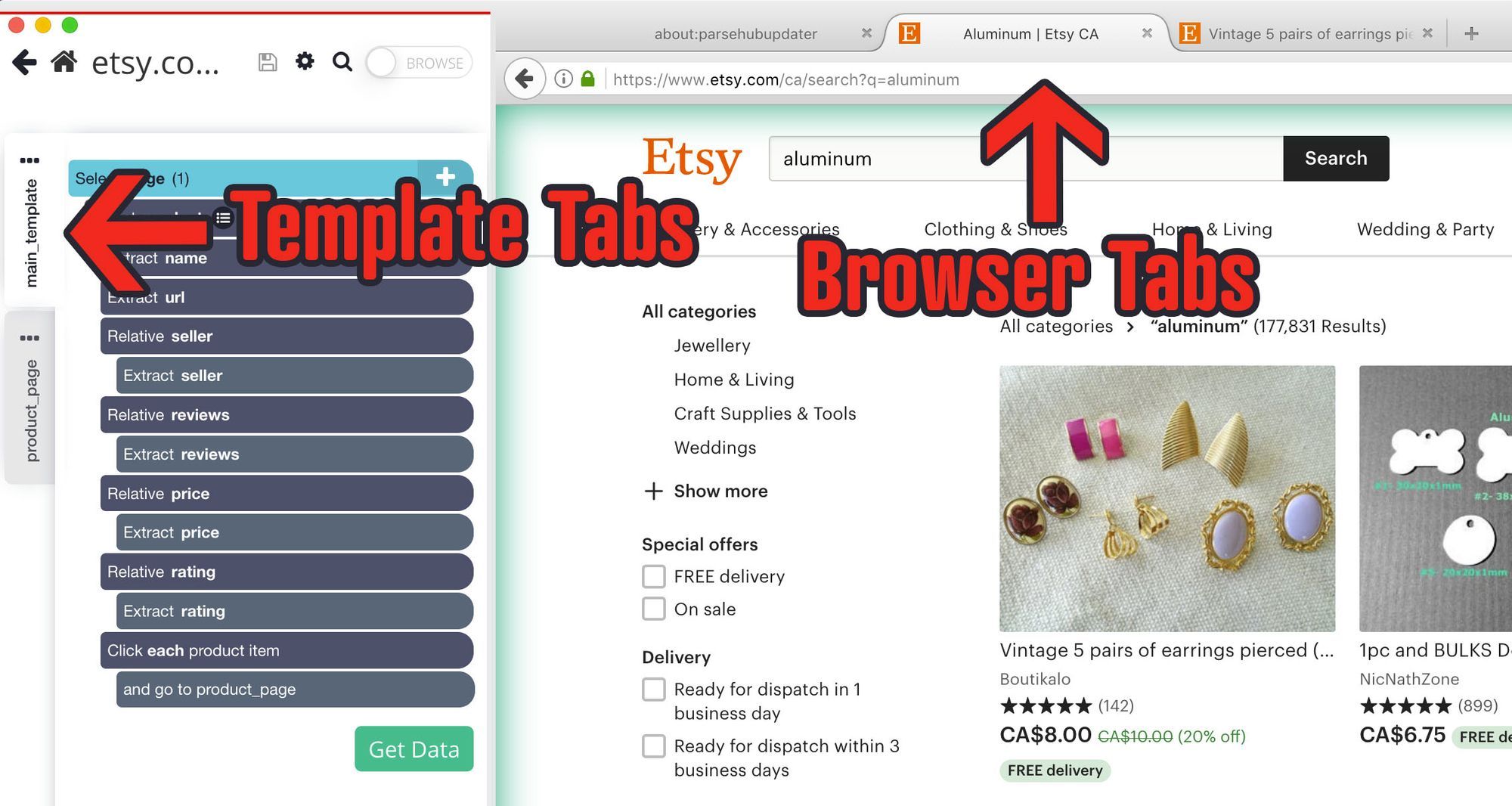

- First, open ParseHub and click on “new project”. Then, enter the URL you will be scraping. The page will be rendered inside the app.

- Once the page is rendered, make your first selection by clicking on the name of the first product on the page. It will be highlighted in green to indicate that it’s been selected.

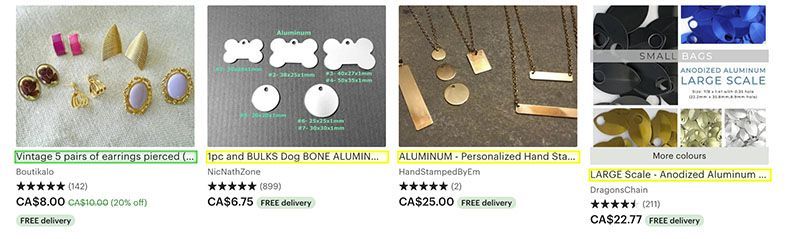

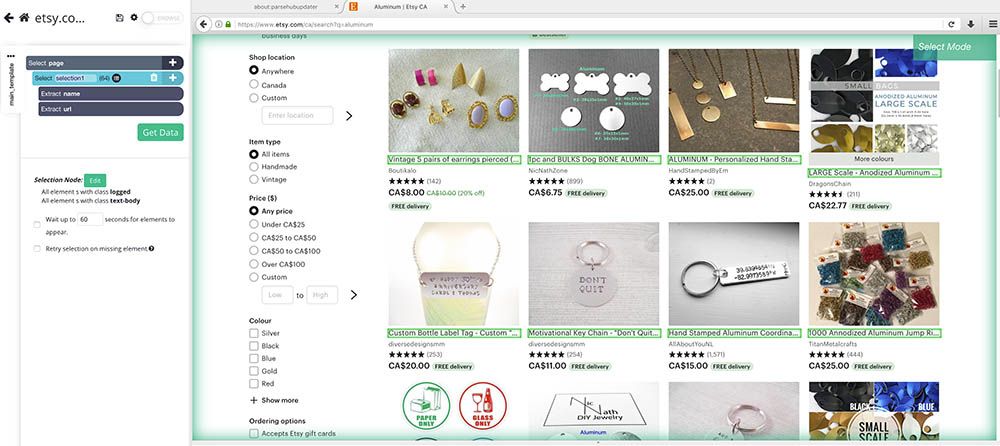

- The rest of the product names on the page will be highlighted in yellow. Click on the second one on the list to select them all. In the right sidebar, rename your selection to product. ParseHub is now extracting each product’s name and URL.

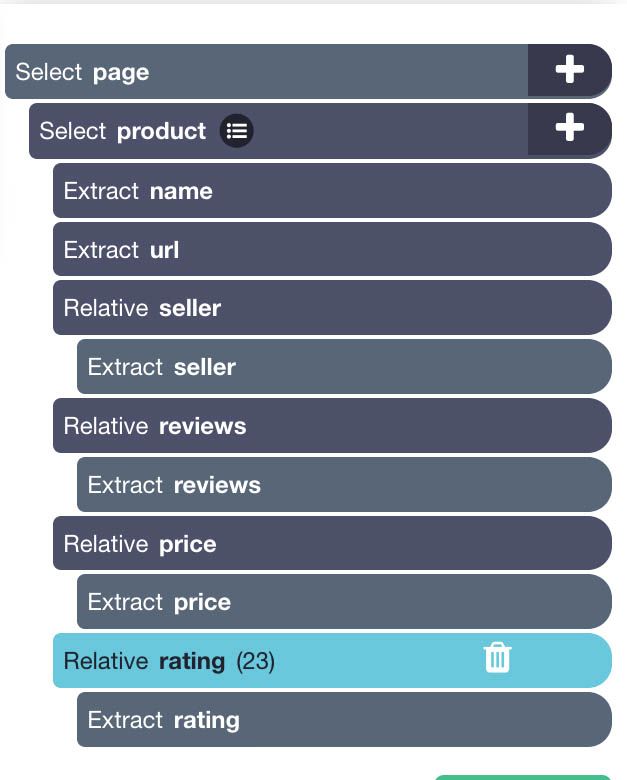

- Now it’s time to extract more data. Use the PLUS(+) sign next to the product selection and choose the Relative Select command.

- Using this command, click on the name of the first product on the page and then on the seller name. An arrow will appear to indicate the connection you’re creating. Rename this selection to seller.

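- Now repeat the previous two steps (choose Relative Select, then click the product name followed by the new element) to extract additional data, such as the product’s price, rating and number of reviews. Your project should look like this: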
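ParseHub builds these selections visually, so you don’t need to write any code. If it helps to see what a relative selection amounts to, here is a minimal Python sketch using only the standard library: each product card yields one record, and the seller and price are read relative to that card. The HTML snippet and its class names (`product`, `title`, `seller`, `price`) are hypothetical, not Etsy’s real markup, and this is not how ParseHub works internally.

```python
from html.parser import HTMLParser

# Hypothetical search-results markup; the class names are illustrative
# assumptions, not Etsy's actual HTML.
SAMPLE_HTML = """
<div class="product">
  <a class="title" href="/listing/1">Aluminum Ring</a>
  <span class="seller">MetalWorks</span>
  <span class="price">12.50</span>
</div>
<div class="product">
  <a class="title" href="/listing/2">Aluminum Tray</a>
  <span class="seller">TrayCo</span>
  <span class="price">30.00</span>
</div>
"""


class ProductParser(HTMLParser):
    """Builds one record per product card; fields are read relative to that card."""

    # Maps a (hypothetical) CSS class to the field name used in the output.
    FIELDS = {"title": "name", "seller": "seller", "price": "price"}

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # output field whose text is currently being read

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if cls == "product":
            # A new card starts: subsequent fields attach to this record.
            self.products.append({})
        elif cls in self.FIELDS and self.products:
            self._field = self.FIELDS[cls]
            if tag == "a":
                # The product name is a link, so also keep its URL.
                self.products[-1]["url"] = dict(attrs).get("href")

    def handle_endtag(self, tag):
        self._field = None

    def handle_data(self, data):
        if self._field and data.strip():
            self.products[-1][self._field] = data.strip()


def parse_products(html):
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


if __name__ == "__main__":
    for product in parse_products(SAMPLE_HTML):
        print(product)
```

Each record pairs the product name and URL with the seller and price found inside the same card, which is the idea behind choosing Relative Select instead of a plain selection.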

Scraping additional details

You might want to extract more data from each product’s details page. We will now set up ParseHub to click on each product and extract that data as well.

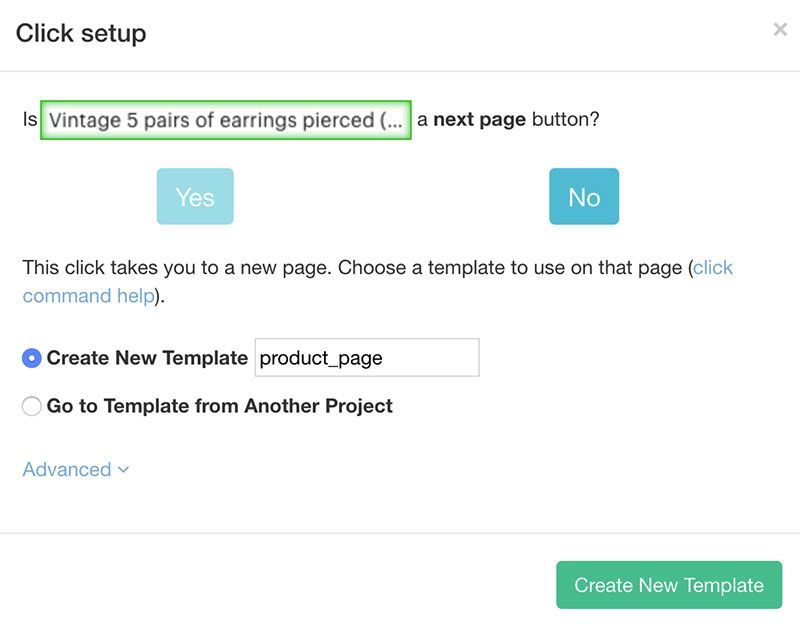

- Click on the PLUS(+) sign next to your product selection and choose the Click command.

- A pop-up will appear asking you if this is a “next page link”. Click on “No” and choose “Create New Template”, name it “product_page” and click on the “Create New Template” button.

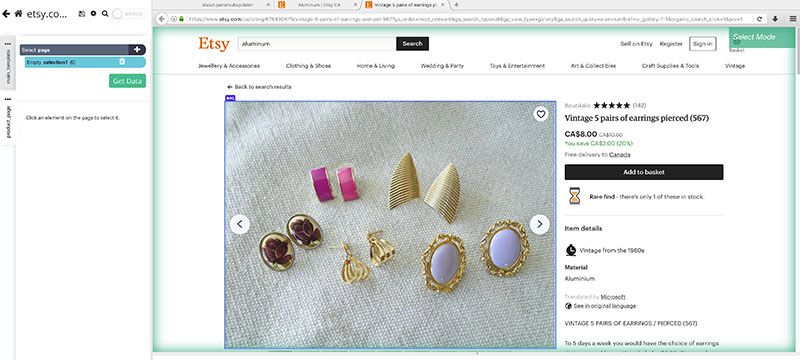

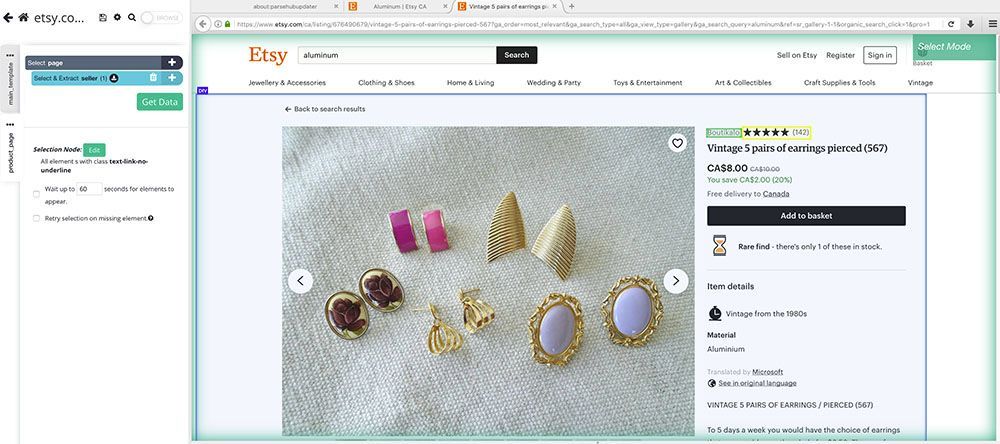

- A new browser tab will open inside of ParseHub and the first product page on the list will be rendered.

- A Select command will be created automatically. You can now click on any element on the page that you would like to extract. In this case, we will extract the seller name and seller URL. Rename your selection accordingly.

- Using the PLUS(+) sign next to the page selection, you can add additional select commands to extract additional data.
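Conceptually, the product_page template visits each product URL and attaches the extra fields to that product. The sketch below illustrates this in plain Python. It assumes hypothetical in-memory pages in place of real HTTP requests and a hypothetical `shop-link` class on the seller link; `add_details` and `scrape_product_page` are illustrative names, not part of ParseHub.

```python
from html.parser import HTMLParser

# Hypothetical product pages keyed by URL; a real run would fetch these over
# HTTP. The "shop-link" class name is an assumption for illustration.
PAGES = {
    "/listing/1": '<h1>Aluminum Ring</h1><a class="shop-link" href="/shop/metalworks">MetalWorks</a>',
    "/listing/2": '<h1>Aluminum Tray</h1><a class="shop-link" href="/shop/trayco">TrayCo</a>',
}


class SellerParser(HTMLParser):
    """Extracts the seller name and seller URL from one product page."""

    def __init__(self):
        super().__init__()
        self.seller = None
        self.seller_url = None
        self._in_link = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "a" and attrs.get("class") == "shop-link":
            self._in_link = True
            self.seller_url = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "a":
            self._in_link = False

    def handle_data(self, data):
        if self._in_link and data.strip():
            self.seller = data.strip()


def scrape_product_page(html):
    parser = SellerParser()
    parser.feed(html)
    parser.close()
    return {"seller": parser.seller, "seller_url": parser.seller_url}


def add_details(products, fetch):
    """'Click' each product URL and nest its details under product_page."""
    for product in products:
        html = fetch(product["url"])
        product["product_page"] = scrape_product_page(html)
    return products


products = [
    {"name": "Aluminum Ring", "url": "/listing/1"},
    {"name": "Aluminum Tray", "url": "/listing/2"},
]
add_details(products, PAGES.__getitem__)
```

Passing `fetch` as a parameter keeps the sketch runnable offline; swapping in a real HTTP call would be the only change needed to point it at live pages.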

Adding Pagination

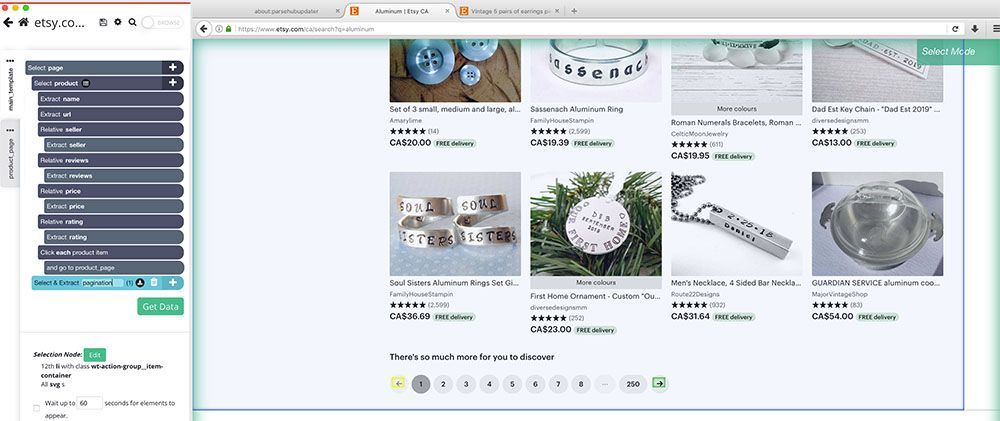

Now, we will set up ParseHub to navigate to further pages of search results and extract more data.

- First, use the browser and template tabs to return to the main template and the search results page.

- Use the PLUS(+) sign next to the page selection and choose the Select command.

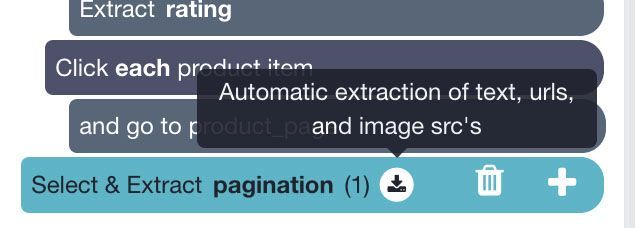

- Using this command, scroll all the way down to the bottom of the page and click on the “next page” link. Rename your selection to pagination.

- Click on the icon next to your pagination selection to expand it.

- Delete both Extract commands under this selection.

- Use the PLUS(+) sign next to your pagination selection and choose the Click command.

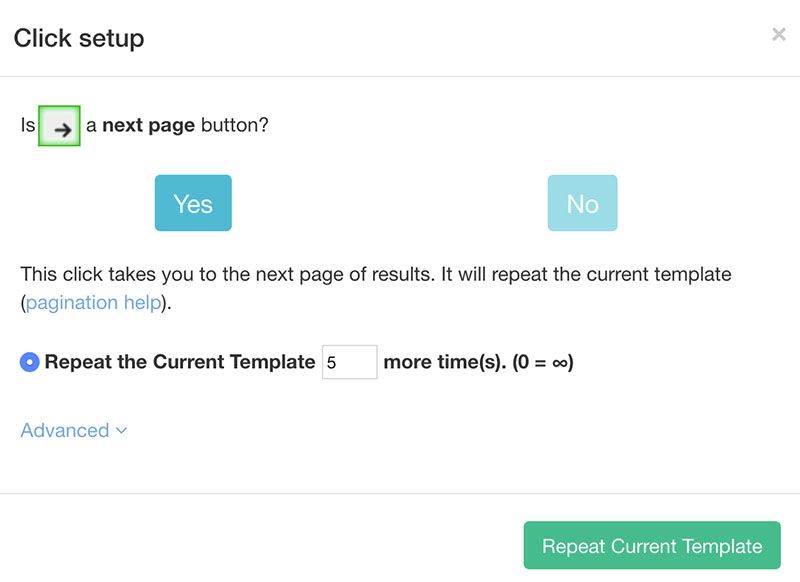

- A pop-up will appear asking you if this is a “next page link”. Click on “Yes” and enter the number of times you’d like to repeat this process. In this case, we will repeat it 5 times.
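The pagination loop can be pictured as follows. This is a minimal sketch with a hypothetical in-memory site standing in for real search pages. It assumes that repeating the click 5 times means following the next-page link at most 5 times, so up to 6 pages are scraped in total (the first page plus 5 more), and that scraping stops early when a page has no next link.

```python
# Hypothetical site: each search page maps to (items on that page, next-page URL).
SITE = {
    f"/search?page={n}": ([f"item-{n}a", f"item-{n}b"], f"/search?page={n + 1}")
    for n in range(1, 10)
}
SITE["/search?page=10"] = (["item-10a", "item-10b"], None)  # last page: no next link


def scrape_with_pagination(start_url, fetch, max_next=5):
    """Scrape start_url, then follow the next-page link at most max_next times."""
    results = []
    url = start_url
    # One iteration for the first page plus one per next-page click.
    for _ in range(max_next + 1):
        items, next_url = fetch(url)
        results.extend(items)
        if next_url is None:
            break  # no "next page" link, so stop early
        url = next_url
    return results


items = scrape_with_pagination("/search?page=1", SITE.__getitem__, max_next=5)
print(len(items), items[-1])  # prints: 12 item-6b
```

Capping the number of clicks keeps a run bounded even on sites with hundreds of result pages, which is why it is worth setting a limit rather than following every next link.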

Running your scrape

It’s now time to run your scrape and extract your data as an Excel sheet.

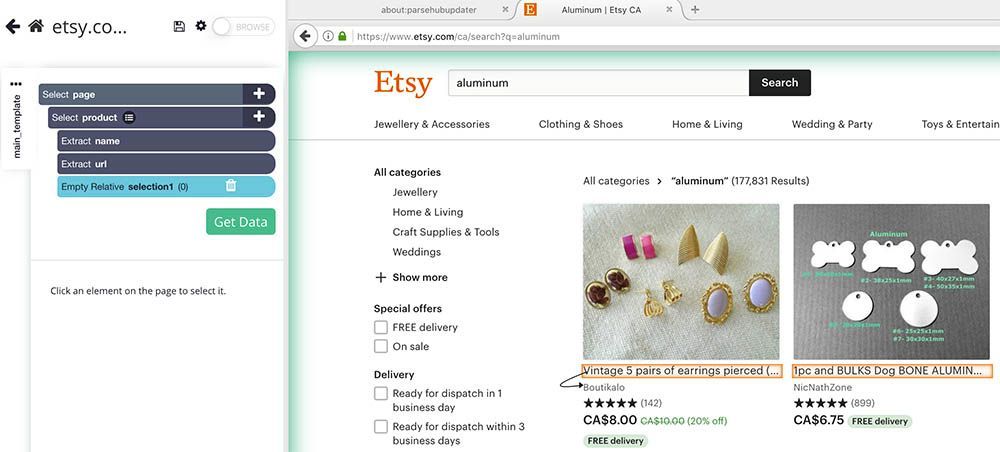

To do this, click on the green “Get Data” button in the left sidebar. Here you can run, test, or schedule your web scraping project.

In this case, we will run it right away.

Closing Thoughts

Your results should look similar to this if you followed our guide correctly:

Once the scrape is complete, you will be able to download it as a CSV/Excel or JSON file.
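If you download the JSON file but want a spreadsheet, data from the product_page template may be nested inside each product. The sketch below flattens a product list into CSV with Python’s standard library. It assumes the export has a top-level `product` list whose objects are keyed by your selection names, with product_page data as a nested object; this shape is an assumption, so check it against your own export.

```python
import csv
import io
import json

# Assumed JSON export shape (verify against your own file): a top-level
# "product" list, with product_page data nested inside each product.
SAMPLE_JSON = """
{"product": [
  {"name": "Aluminum Ring", "url": "/listing/1",
   "product_page": {"seller": "MetalWorks", "seller_url": "/shop/metalworks"}},
  {"name": "Aluminum Tray", "url": "/listing/2"}
]}
"""


def flatten(row, prefix=""):
    """Turn nested objects into dotted column names, e.g. product_page.seller."""
    flat = {}
    for key, value in row.items():
        name = prefix + key
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=name + "."))
        else:
            flat[name] = value
    return flat


def json_to_csv(json_text, key="product"):
    rows = [flatten(row) for row in json.loads(json_text)[key]]
    # Union of column names in first-seen order; missing cells are left blank.
    fieldnames = []
    for row in rows:
        for name in row:
            if name not in fieldnames:
                fieldnames.append(name)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()


if __name__ == "__main__":
    print(json_to_csv(SAMPLE_JSON))
```

Saving the returned text to a `.csv` file gives you a sheet that opens directly in Excel, with one column per extracted field.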

This guide was revised in 2023, and websites change periodically. If you run into any issues during your project, feel free to contact our live support team and we will be happy to assist you.

Happy scraping!