There are many ways to grow an email list nowadays.

One of the fastest methods to do so involves web scraping. With the help of a free web scraper and by carefully selecting your lead sources, you can quickly build a high-quality email list.

You can then use this list for email marketing efforts or use it as a custom audience in Google or Facebook Ads. Also, many companies web scrape email lists for their prospecting or cold email outreach.

Whatever your goal may be, here’s how to scrape email addresses from any website into a convenient spreadsheet.

Email Scraping: A few considerations

Before we start scraping, there are a few things to keep in mind. After all, cold emailing a list of scraped addresses might not be the best approach to grow your business.

Here are some considerations to make before starting:

- Is the source of emails you’ll be scraping legitimate? Have these addresses been made public by the users or have they been published without their consent? Are these real, high-quality email addresses?

- How will you use this email list? Do you plan to blast this list with “spammy” messages to see who bites? Or are you planning to use this list to build legitimate connections with your potential customers? Furthermore, you could use this list to build target audiences for Google Ads or Facebook Ads.

- When dealing with scraped email addresses, we recommend checking your local laws regarding spamming and what you are allowed to do with the emails you’ve collected.

- Finally, you should clean the list to reduce your bounce rate and lower the chances of getting blacklisted or going into spam.
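As a rough illustration of the list-cleaning step, here is a minimal Python sketch. It assumes the scraped addresses are already available as a list of strings. The function name `clean_email_list`, the pattern and the sample data are hypothetical and not part of ParseHub. The check is only a loose syntax filter plus de-duplication, so it cannot confirm that a mailbox actually exists.

```python
import re

# Deliberately loose syntax check: exactly one "@", no whitespace,
# and at least one dot in the domain part. This is an assumption made
# for illustration, not a full email validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def clean_email_list(raw_emails):
    """Return plausible, de-duplicated addresses in first-seen order."""
    seen = set()
    cleaned = []
    for raw in raw_emails:
        # Normalize whitespace and case so duplicates compare equal.
        email = raw.strip().lower()
        if not EMAIL_PATTERN.match(email):
            continue  # drop entries that do not look like an address
        if email in seen:
            continue  # drop duplicates
        seen.add(email)
        cleaned.append(email)
    return cleaned


# Hypothetical sample input, for illustration only.
sample = [" Jane.Doe@example.com ", "jane.doe@example.com", "not-an-email", "bob@example.org"]
print(clean_email_list(sample))  # ['jane.doe@example.com', 'bob@example.org']
```

A dedicated verification service would likely catch more problems (such as inactive mailboxes), but a pass like this removes the obvious duplicates and malformed entries first.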

Now that you have figured out these factors, let’s get into how to scrape email addresses from any website.

Getting Started

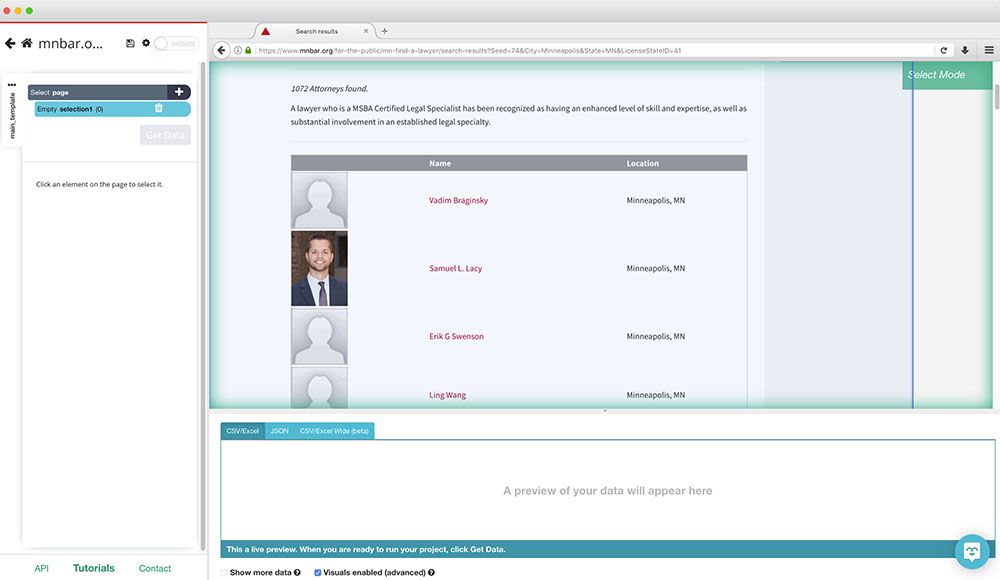

To get started, we’ll need a webpage with email addresses we want to scrape. In this case, we will scrape the Minnesota State Bar Association’s website for email addresses of their registered attorneys.

As you can see, this site lists attorneys with a link to each profile, where you can see their email address once you click on the email button. We will have to set up our scraper to click on each profile and extract the email address.

Next, you will need a web scraper that can scrape emails from any website. For this example, we will download and install ParseHub, a free and powerful web scraper that works with any website.

Scraping Email Addresses

Now it’s time to get scraping.

- Open ParseHub and click on “New Project”. Then enter the URL of the page you will want to scrape. ParseHub will now render the page inside the app.

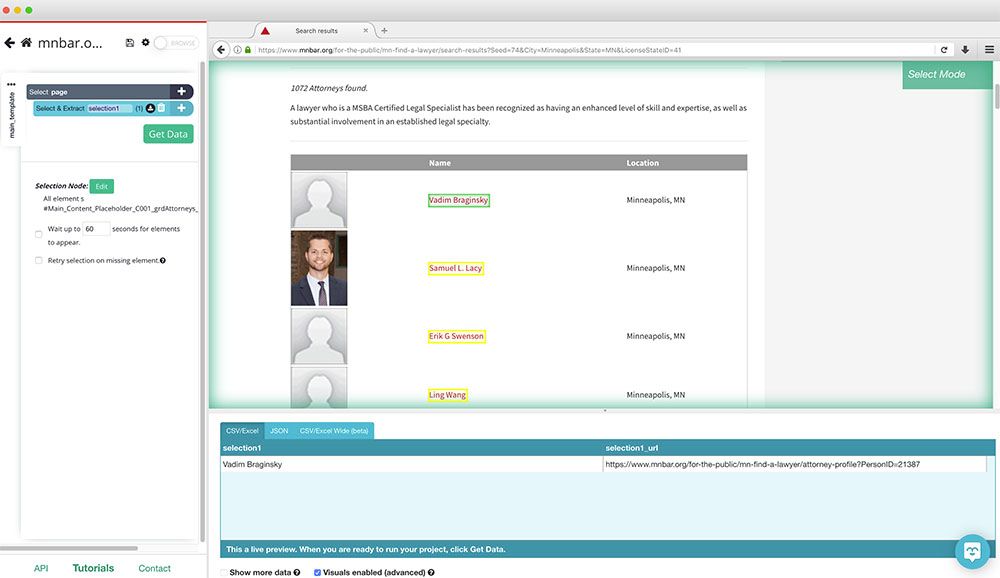

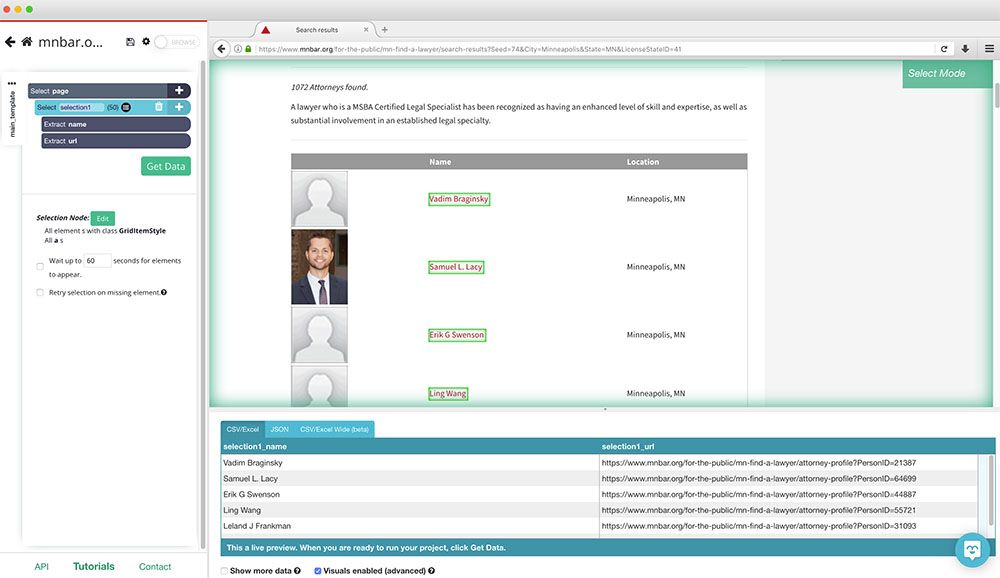

- Start by clicking on the first name on the list. It will be highlighted in green to indicate that it has been selected.

- The rest of the names on the list will be highlighted in yellow. Click on the second one on the list to select them all.

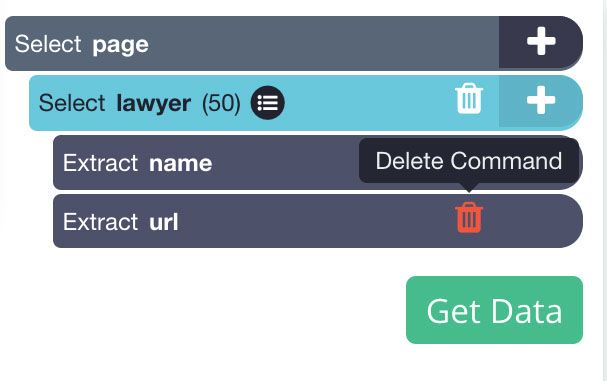

- On the left sidebar, rename your selection to lawyer.

- Next, remove the URL extraction under your lawyer selection, since we are not interested in pulling the profile URL in this case.

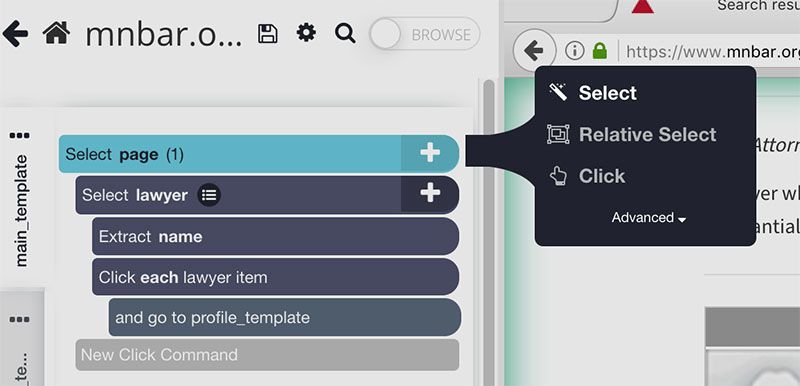

- Now, click on the PLUS(+) icon next to the lawyer selection and choose the "Click" command.

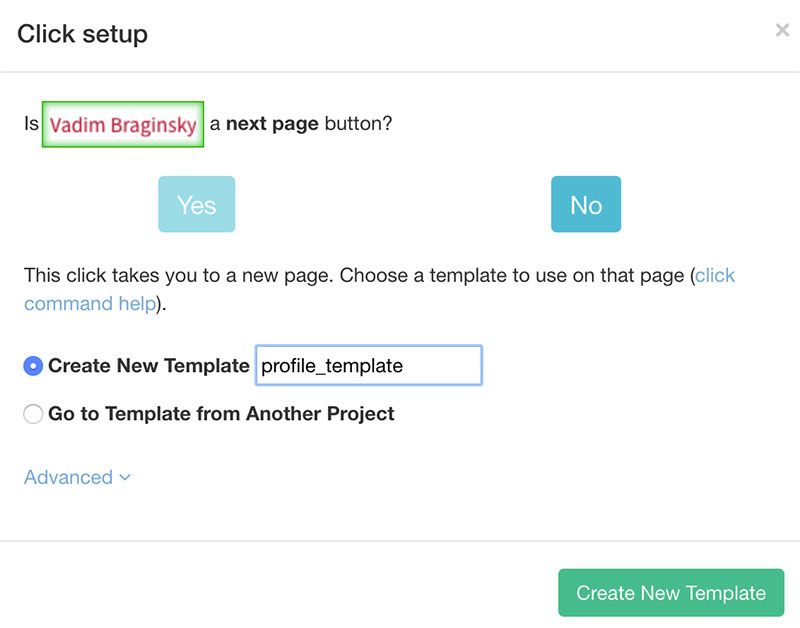

- A pop-up will appear asking you if this is a “next page” command. Click on “No” and next to Create New Template enter the name profile_template (or something relevant). Then click on the Create New Template button.

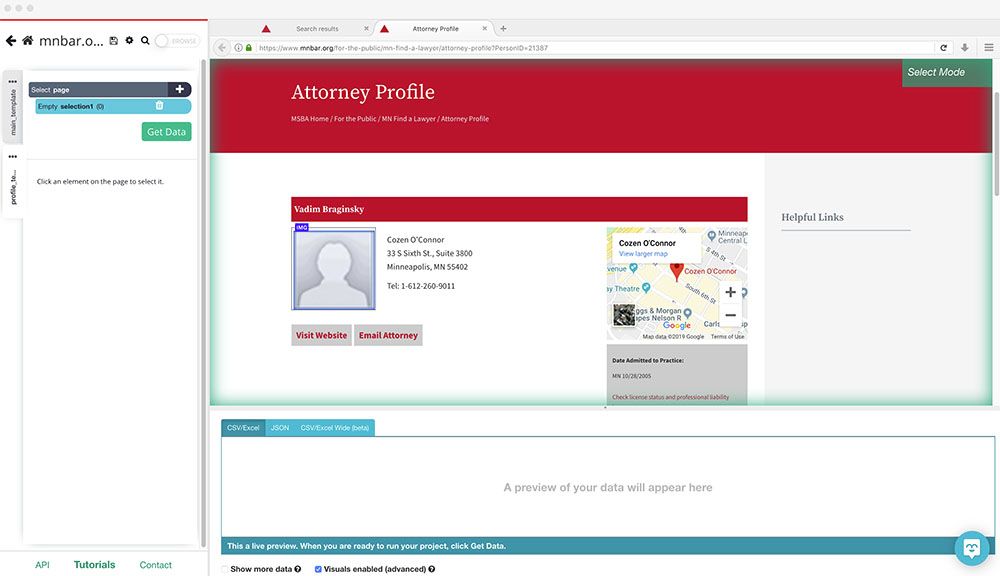

- ParseHub will now open a new tab and render the profile page for the first name on the list. Here you can make your first selection for data to extract from this page.

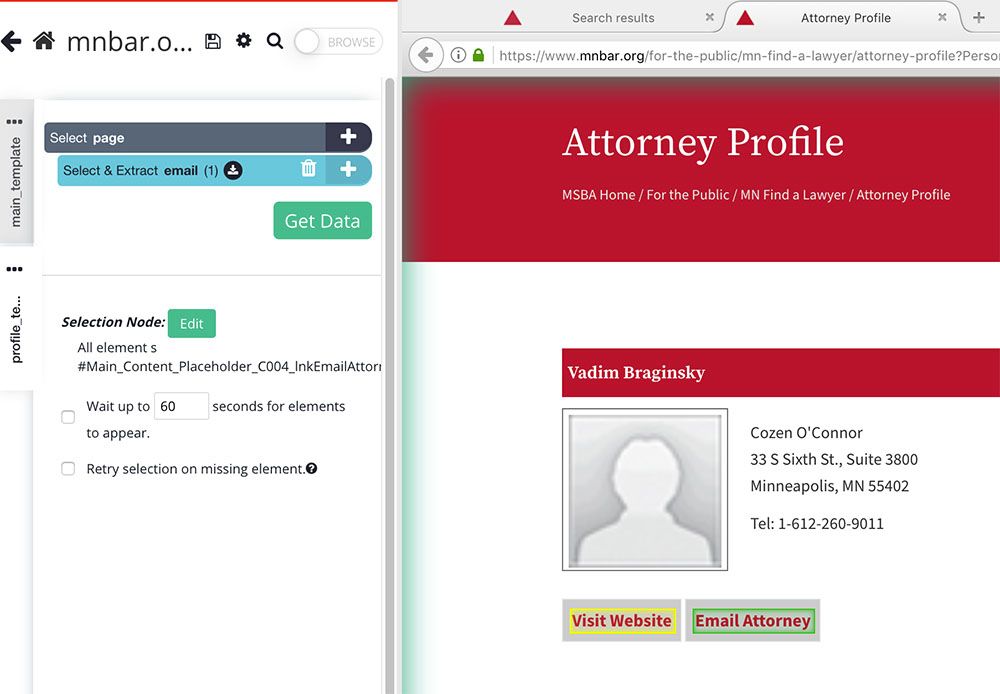

- First, click on the “Email Attorney” button to select it. Rename your new selection to email.

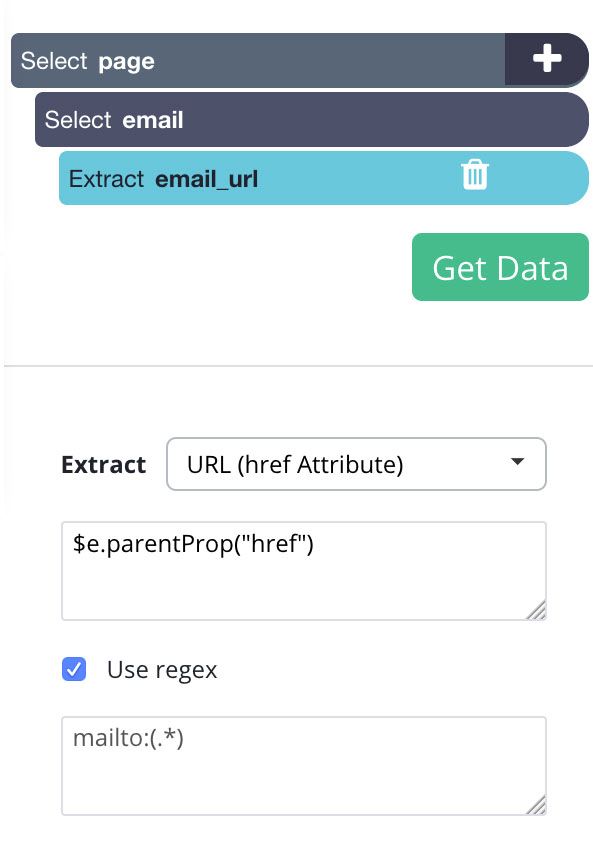

- You will notice that the email being pulled starts with “mailto:”. We’ll set up ParseHub to clean up the address before it extracts it.

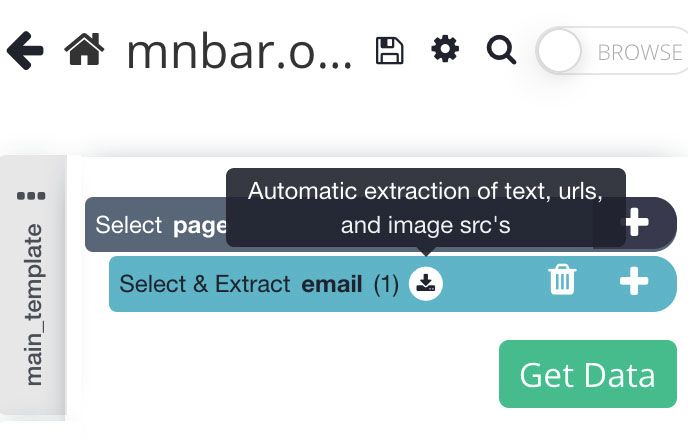

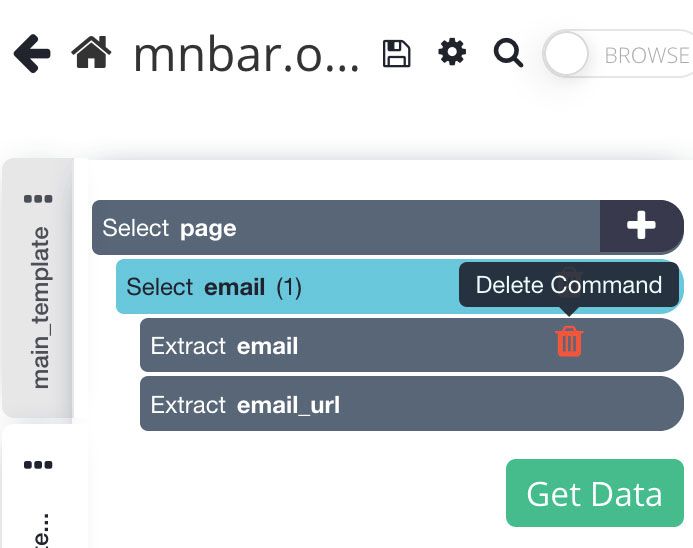

- To do this, expand your email selection by clicking on the icon next to it.

- First, remove the “extract email” command since this is just extracting the text inside the button.

- Now select the email_url extraction and tick the “Use Regex” box. In the textbox under it, enter the following regex code: mailto:(.*)

- Now, you can add additional “select” commands under the page selection to also extract the lawyer’s address, phone number and more. However, for this example, we will only focus on their email addresses.
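To see what the mailto:(.*) pattern from the steps above captures, here is a small Python sketch. It uses Python's `re` module purely as an illustration and assumes, as in the tutorial, that the extracted link value starts with “mailto:”. The helper name `strip_mailto` and the sample address are hypothetical.

```python
import re

# Same pattern as entered in the "Use Regex" box: everything after
# "mailto:" lands in the first capture group.
MAILTO_PATTERN = re.compile(r"mailto:(.*)")


def strip_mailto(href):
    """Return the part after 'mailto:', or the input unchanged if absent."""
    match = MAILTO_PATTERN.search(href)
    return match.group(1) if match else href


# Hypothetical link value, for illustration only.
print(strip_mailto("mailto:jane.doe@example.com"))  # jane.doe@example.com
```

Note that (.*) captures everything up to the end of the line, so a link such as mailto:someone@example.com?subject=Hello would keep the ?subject=Hello part. If the site you are scraping uses links like that, a narrower pattern may be needed.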

Pagination

Now, ParseHub is set up to extract the name and email of every lawyer on the first page of results.

We will now set up ParseHub to extract data from additional pages of results.

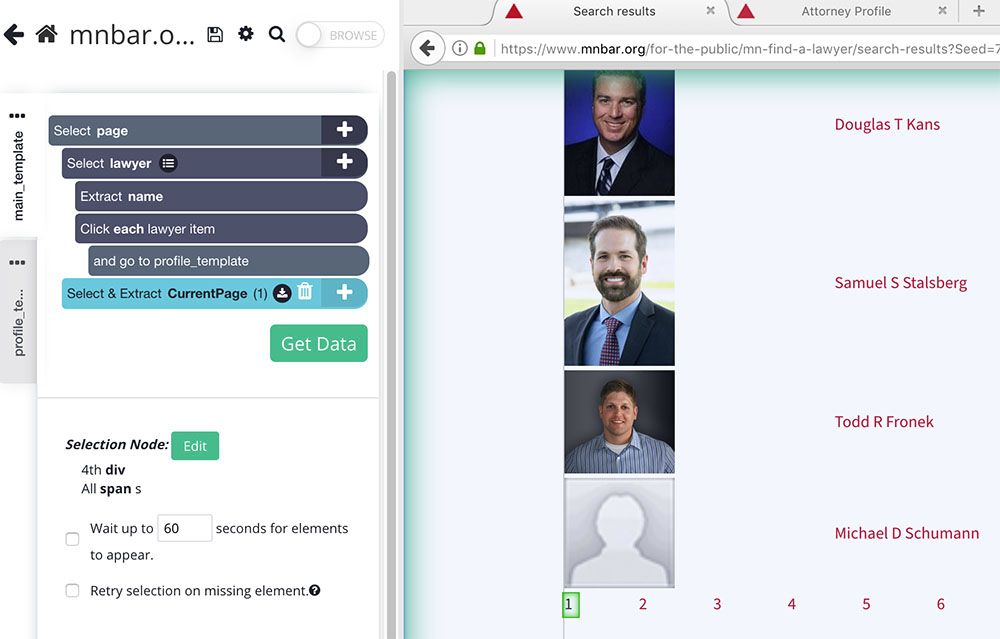

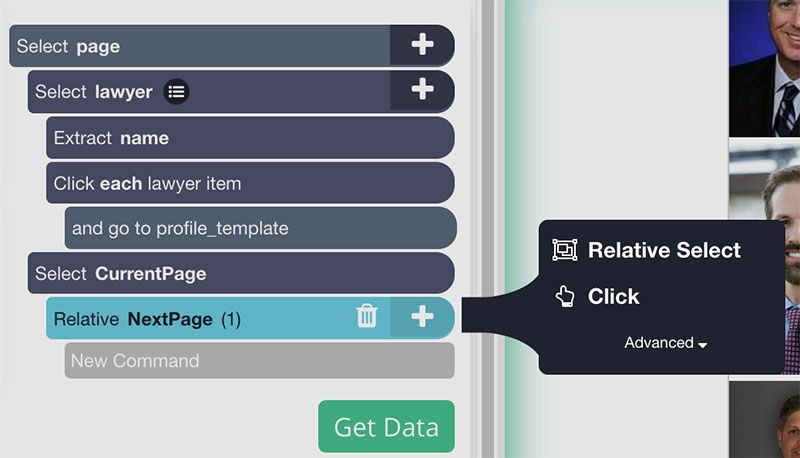

- In ParseHub, use the tabs at the top to return to the result list page. Then on the left sidebar, click on your main_template tab.

- Use the PLUS(+) sign next to the page selection and choose the “Select” command.

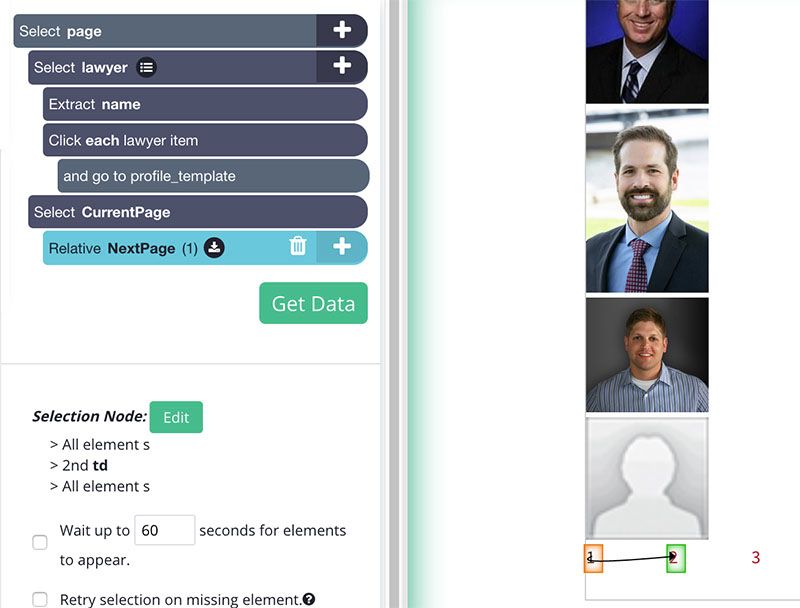

- Scroll all the way down to the bottom of the page and click on the current page number (since this page does not have a dedicated “next page” link). Rename this selection to CurrentPage.

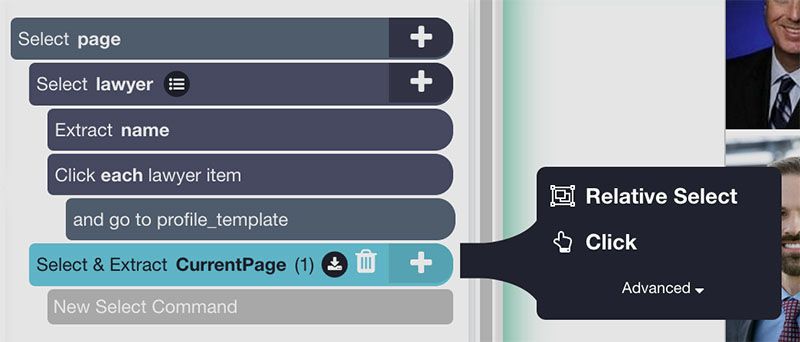

- Use the PLUS(+) button next to the CurrentPage command and choose the Relative Select command.

- Using the Relative Select command, click on the current page number and then on the 2nd-page link. You will see an arrow that will establish the connection between these two elements. Rename your new Relative Select command to NextPage.

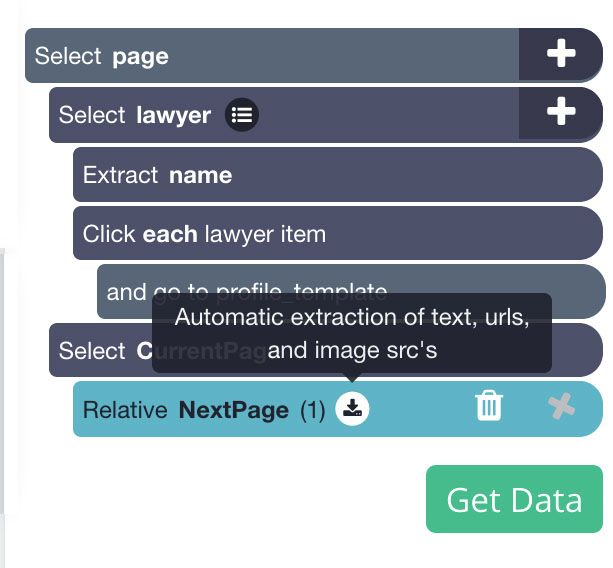

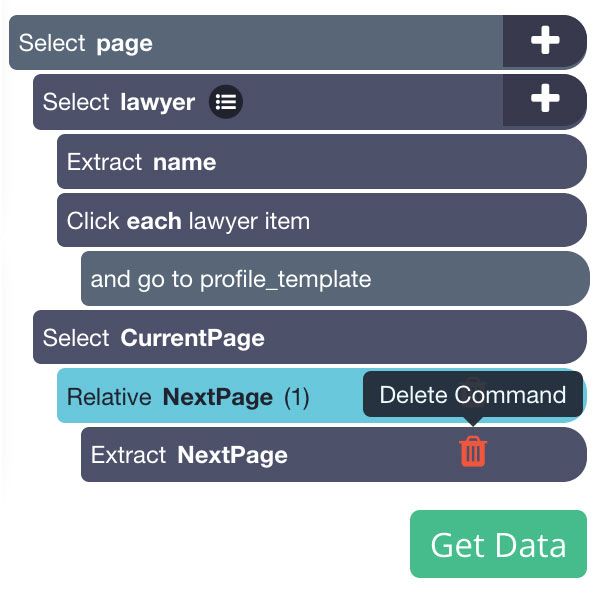

- Expand your NextPage selection and remove its extract command.

- Now click on the PLUS(+) sign next to the NextPage selection and add a Click command.

- A pop-up will appear asking you if this is a “next page” button. Click on “Yes” and enter the number of times you’d like to repeat this process. For this example, we will repeat it 10 times.

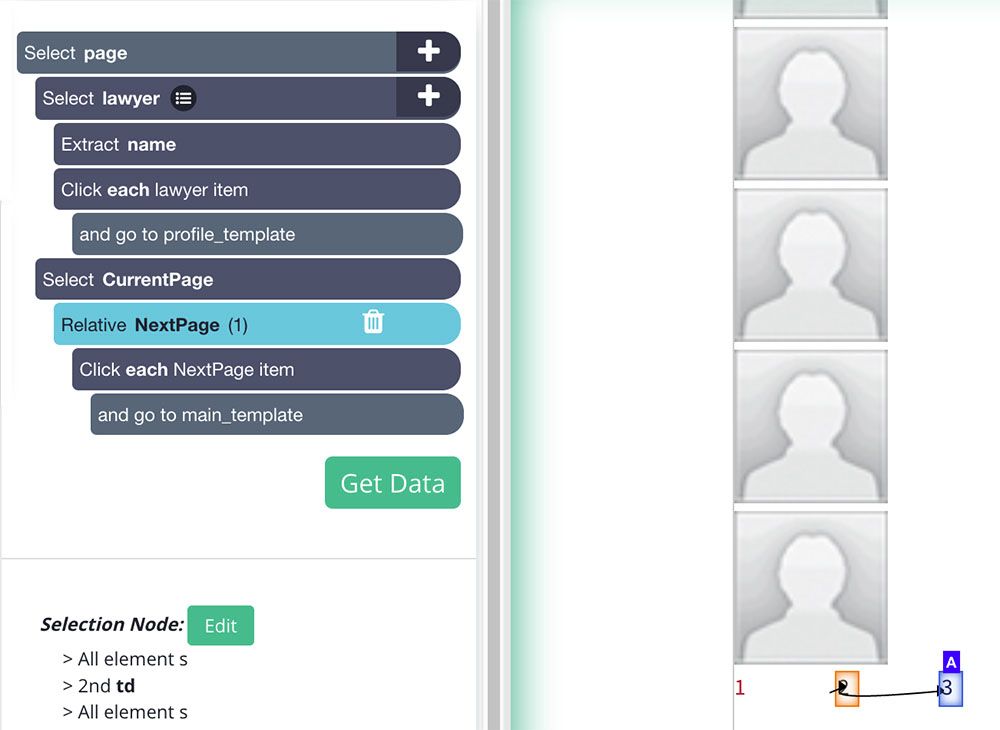

- ParseHub will now render the second page of the search results list. Scroll all the way to the bottom to make sure your Relative Select is working correctly.

- If it is not working correctly, select your NextPage selection, click on the “2” at the bottom of the page and then on the “3” next to it. Your Relative Select command should now work correctly.

Running Your Scrape

You are now ready to run your scrape and extract the data you have selected.

Start by clicking on the green Get Data button on the left sidebar. Here you can choose to test your scrape job, run it or schedule it for later.

For larger scrape jobs, we recommend that you do a test run before submitting your scrape job. In this case, we will just run it right away.

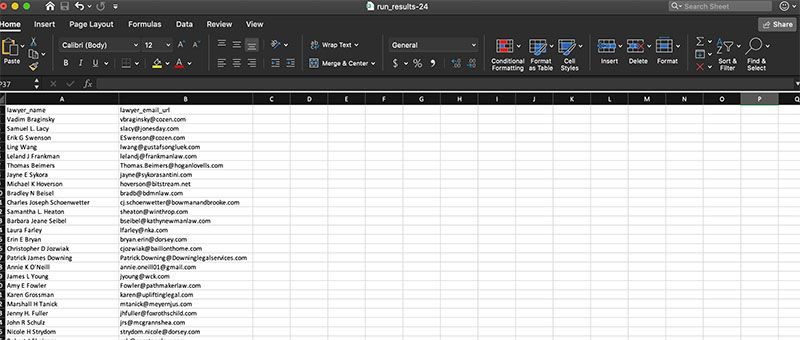

ParseHub will now go and scrape all the data you have selected. Once the data has been collected, you will be notified via email and you’ll be able to download your scrape as an Excel spreadsheet or JSON file.
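If you download the JSON file, a short script can flatten it into spreadsheet-style rows. The sketch below is only an illustration under stated assumptions: it assumes the export is an object with a `lawyer` list whose entries carry `name` and `email_url` fields, mirroring the selection names used in this project. Your actual file may be shaped differently, so check it before relying on these keys. The function name `results_to_csv` and the sample data are hypothetical.

```python
import csv
import io
import json


def results_to_csv(results_json, list_key="lawyer"):
    """Convert a JSON export string into CSV text with name and email columns.

    The key names ("lawyer", "name", "email_url") are assumptions based on
    the selection names in this tutorial, not a documented export format.
    """
    data = json.loads(results_json)
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["name", "email"])
    for entry in data.get(list_key, []):
        # Missing fields become empty cells rather than raising an error.
        writer.writerow([entry.get("name", ""), entry.get("email_url", "")])
    return buffer.getvalue()


# Hypothetical sample export, for illustration only.
sample_json = '{"lawyer": [{"name": "Jane Doe", "email_url": "jane.doe@example.com"}]}'
print(results_to_csv(sample_json))
```

In practice you would read the downloaded file from disk and write the returned text to a .csv file that any spreadsheet application can open.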

Closing Thoughts

You now know how to scrape email addresses from any website.

But the power of web scraping doesn’t end there. In fact, we’ve written an in-depth guide on how to use web scraping to super-charge your lead generation efforts.

However, we know that not every website is built the same way. If you run into any issues during your scrape job, reach out to us at hello[at]parsehub.com or use the live chat on our homepage.

You may also be interested in reading the following:

- How to Scrape eBay Product Data: Product Details, Prices, Sellers and more.

- Web Scraper Pagination: How to Scrape Multiple Pages on a Website

- How to Scrape Website Data into Google Sheets

Want to become an expert on Web Scraping? Sign up for our Free Web Scraping Courses and become certified today.

If you require additional assistance with your email web scraping, contact our live chat support. Our experts will help you out!